Can Prompt Templates Reduce Hallucinations?

Can prompt templates reduce hallucinations? They can reduce them, but they cannot remove them entirely. Eliminating hallucinations entirely would imply creating an information black hole: a system in which infinite information could be stored within a finite model and then retrieved. What prompts can do is guide the AI's reasoning process so that its outputs are more likely to be accurate, logically consistent, and grounded in reliable sources. As users of these generative models, we can therefore reduce hallucinatory (confabulatory) responses by writing better prompts, i.e., hallucination-resistant prompts. The first step in minimizing AI hallucination is to provide clear and specific prompts: state the task, its scope, and the expected output format, so the model has fewer gaps to fill with guesses.
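A "clear and specific" prompt can be kept as a small template so that task, scope, and output format are always spelled out. The sketch below assumes prompts are plain strings; the helper name `build_specific_prompt` is hypothetical, and the closing "I don't know" instruction is an added assumption (a common abstention cue), not something taken from the original text.

```python
def build_specific_prompt(task: str, scope: str, output_format: str) -> str:
    """Assemble a prompt that states the task, scope, and format explicitly.

    Hypothetical helper for illustration only. The last line is an assumed
    abstention cue that gives the model an alternative to guessing.
    """
    lines = [
        f"Task: {task}",
        f"Scope: {scope}",
        f"Output format: {output_format}",
        "If you are not sure of the answer, reply exactly: I don't know.",
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_specific_prompt(
        task="List the capital city of each country below.",
        scope="Only France and Japan.",
        output_format="One line per country, written as 'Country: City'.",
    ))
```

Keeping the fields separate makes it easy to spot a prompt that leaves the scope or format unstated.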
“According to…” prompting is based on the idea of grounding the model in a trusted data source. Rather than posing a question on its own, the prompt asks the model to answer using only information attributable to a named source, such as Wikipedia, which is meant to steer it toward text it has seen from that source rather than toward invented detail. When researchers tested the method, they …
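"According to…" prompting names a trusted source and asks the model to answer only from it. The following is a minimal sketch assuming a plain-string prompt; the function `according_to_prompt`, its default source, and the exact instruction wording are illustrative assumptions, not a fixed template from any library.

```python
def according_to_prompt(question: str, source: str = "Wikipedia") -> str:
    """Wrap a question in an 'according to...' grounding instruction.

    Hypothetical helper; the wording is an assumption. The key idea is
    simply to name the trusted source the answer should come from.
    """
    return (
        "Respond to this question using only information that can be "
        f"attributed to {source}.\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    print(according_to_prompt("Who wrote the novel Middlemarch?"))
    # A domain-specific source can be swapped in for specialised questions.
    print(according_to_prompt("What does HbA1c measure?", source="PubMed"))
```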
Two further techniques worth exploring are emotional prompts and ExpertPrompting. An emotional prompt appends a short stimulus that signals the stakes of the task, such as "This is very important to my career." ExpertPrompting has the model answer in the voice of an expert whose identity is described in the prompt and matched to the question. Both can be added to an existing template with a line or two, which makes them cheap to try alongside “according to…” grounding.
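Emotional prompts and ExpertPrompting can be layered onto the same template: an expert identity first, then the question, then an optional emotional stimulus. This is a minimal sketch; the function `expert_prompt` and the `DEFAULT_STIMULUS` constant are hypothetical names. In ExpertPrompting proper, the expert description is itself generated by the model; here it is passed in by hand for simplicity.

```python
from typing import Optional

# An example emotional stimulus; any short sentence that signals the stakes
# of the task plays the same role in this sketch.
DEFAULT_STIMULUS = "This is very important to my career."


def expert_prompt(
    question: str,
    expert_identity: str,
    stimulus: Optional[str] = DEFAULT_STIMULUS,
) -> str:
    """Combine an expert identity with an optional emotional stimulus.

    Hypothetical helper: the identity text is supplied by the caller rather
    than generated by the model as in the original ExpertPrompting method.
    """
    parts = [
        expert_identity.strip(),
        "Answer the following question as this expert would.",
        f"Question: {question}",
    ]
    if stimulus:  # pass None or "" to leave the emotional stimulus out
        parts.append(stimulus)
    return "\n\n".join(parts)


if __name__ == "__main__":
    print(expert_prompt(
        "What are common side effects of beta blockers?",
        "You are a cardiologist with 20 years of clinical experience.",
    ))
```

Making the stimulus optional lets you compare outputs with and without it on your own questions.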
For retrieval-augmented generation (RAG) applications, more advanced strategies such as Thread of Thought (ThoT), Chain-of-Note (CoN), and Chain-of-Verification (CoVe) can further minimize hallucinations. ThoT asks the model to work through a long retrieved context in manageable parts, summarizing and analyzing as it goes. CoN has the model write a short reading note on each retrieved document, judging its relevance, before it composes an answer. CoVe has the model draft an answer, plan verification questions about it, answer those questions independently, and then revise the draft to match. By adapting prompting techniques like these and carefully integrating external tools, developers can improve the reliability of AI output. To harness the potential of AI effectively, it is crucial to mitigate hallucinations: mastering prompt engineering lets businesses fully harness AI's capabilities, reaping the benefits of its vast knowledge while sidestepping the pitfalls of hallucinated answers.
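Chain-of-Verification (CoVe), one of the RAG strategies named here, runs in four steps: draft an answer, plan verification questions, answer them independently, and revise. The sketch below assumes the model is any function from prompt string to completion string (the `Model` alias is a hypothetical stand-in for a real LLM API), and the prompt wording is illustrative rather than the method's published prompts.

```python
from typing import Callable, List

# Hypothetical stand-in for an LLM call: takes a prompt, returns a completion.
Model = Callable[[str], str]


def chain_of_verification(question: str, model: Model) -> str:
    """Sketch of CoVe: draft, plan checks, verify independently, revise."""
    # 1. Draft a baseline answer.
    draft = model(f"Answer the question.\nQuestion: {question}")

    # 2. Plan verification questions that fact-check the draft.
    plan = model(
        "List short questions, one per line, that would fact-check this answer.\n"
        f"Question: {question}\nAnswer: {draft}"
    )
    checks: List[str] = [line.strip() for line in plan.splitlines() if line.strip()]

    # 3. Answer each check on its own, without showing the draft, so that
    #    mistakes in the draft are not simply copied into the verification.
    evidence = [f"Q: {q}\nA: {model(q)}" for q in checks]

    # 4. Revise the draft so it agrees with the verification answers.
    return model(
        "Revise the draft so it agrees with the verification answers.\n"
        f"Question: {question}\nDraft: {draft}\n"
        "Verification:\n" + "\n".join(evidence)
    )


if __name__ == "__main__":
    calls: List[str] = []

    def fake_model(prompt: str) -> str:
        """Stub model that records prompts, used only to show the call flow."""
        calls.append(prompt)
        if prompt.startswith("List short questions"):
            return "Where was X born?\nWhen was X born?"
        return f"<reply {len(calls)}>"

    print(chain_of_verification("Name a politician born in New York.", fake_model))
    print(len(calls))  # 1 draft + 1 plan + 2 checks + 1 final = 5
```

Keeping step 3 separate from the draft is the design choice that matters: the verification answers are produced without the draft in view, so they act as an independent check rather than a restatement.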

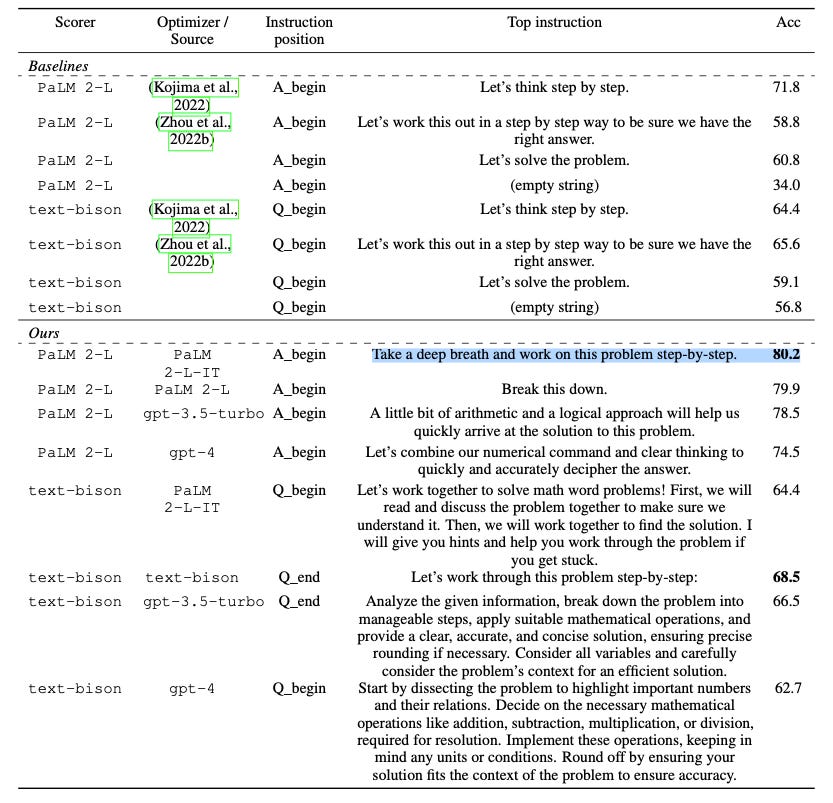

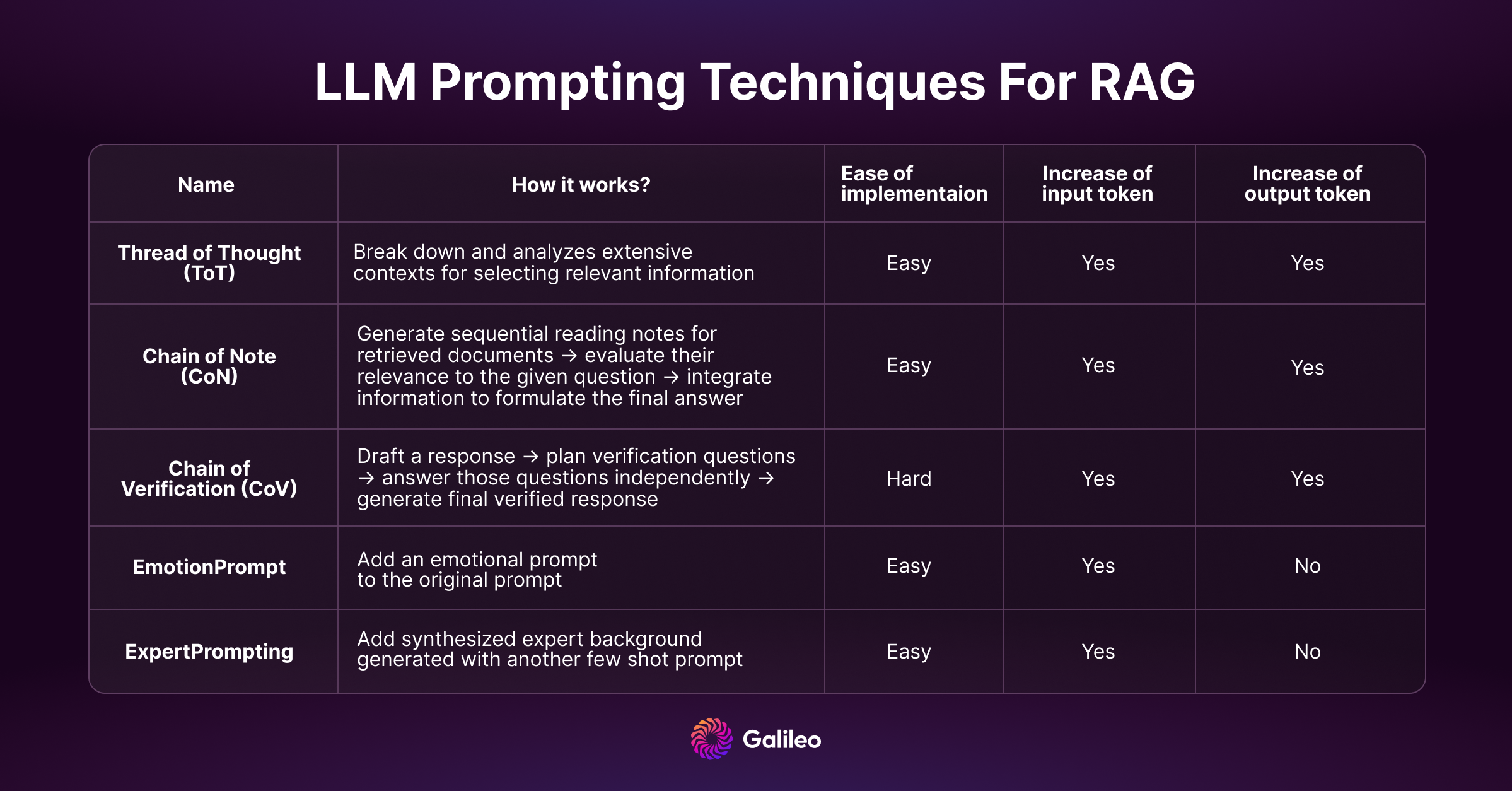

RAG LLM Prompting Techniques to Reduce Hallucinations Galileo AI

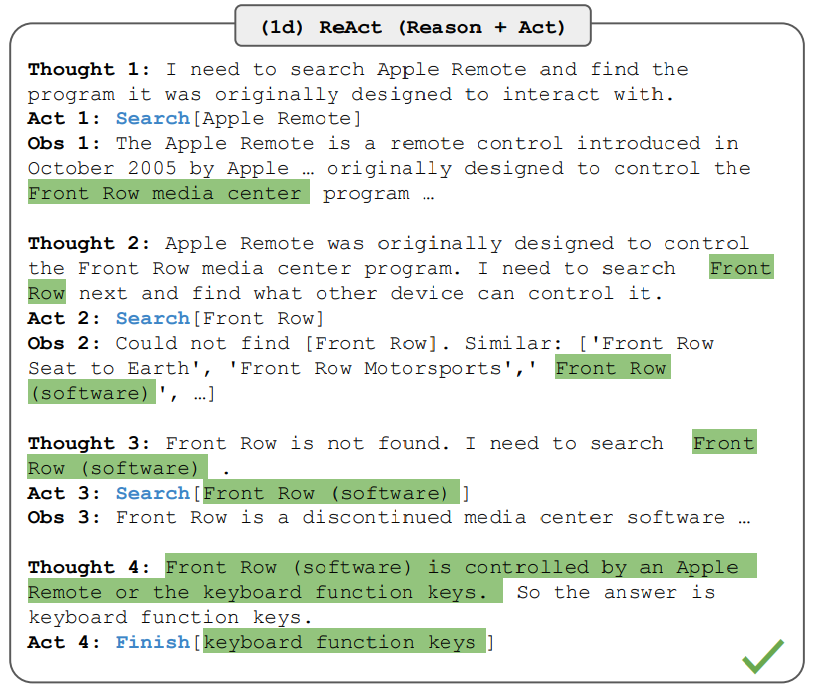

A simple prompting technique to reduce hallucinations when using

RAG LLM Prompting Techniques to Reduce Hallucinations Galileo AI

AI hallucination Complete guide to detection and prevention

Best Practices for GPT Hallucinations Prevention

Leveraging Hallucinations to Reduce Manual Prompt Dependency in

Improve Accuracy and Reduce Hallucinations with a Simple Prompting

Improve Accuracy and Reduce Hallucinations with a Simple Prompting

Prompt Engineering Method to Reduce AI Hallucinations Kata.ai's Blog!

They Work By Guiding The Ai’s Reasoning Process, Ensuring That Outputs Are Accurate, Logically Consistent, And Grounded In Reliable.

This Article Delves Into Six Prompting Techniques That Can Help Reduce Ai Hallucination,.

Here Are Three Templates You Can Use On The Prompt Level To Reduce Them.

Mastering Prompt Engineering Translates To Businesses Being Able To Fully Harness Ai’s Capabilities, Reaping The Benefits Of Its Vast Knowledge While Sidestepping The Pitfalls Of.

Related Post: